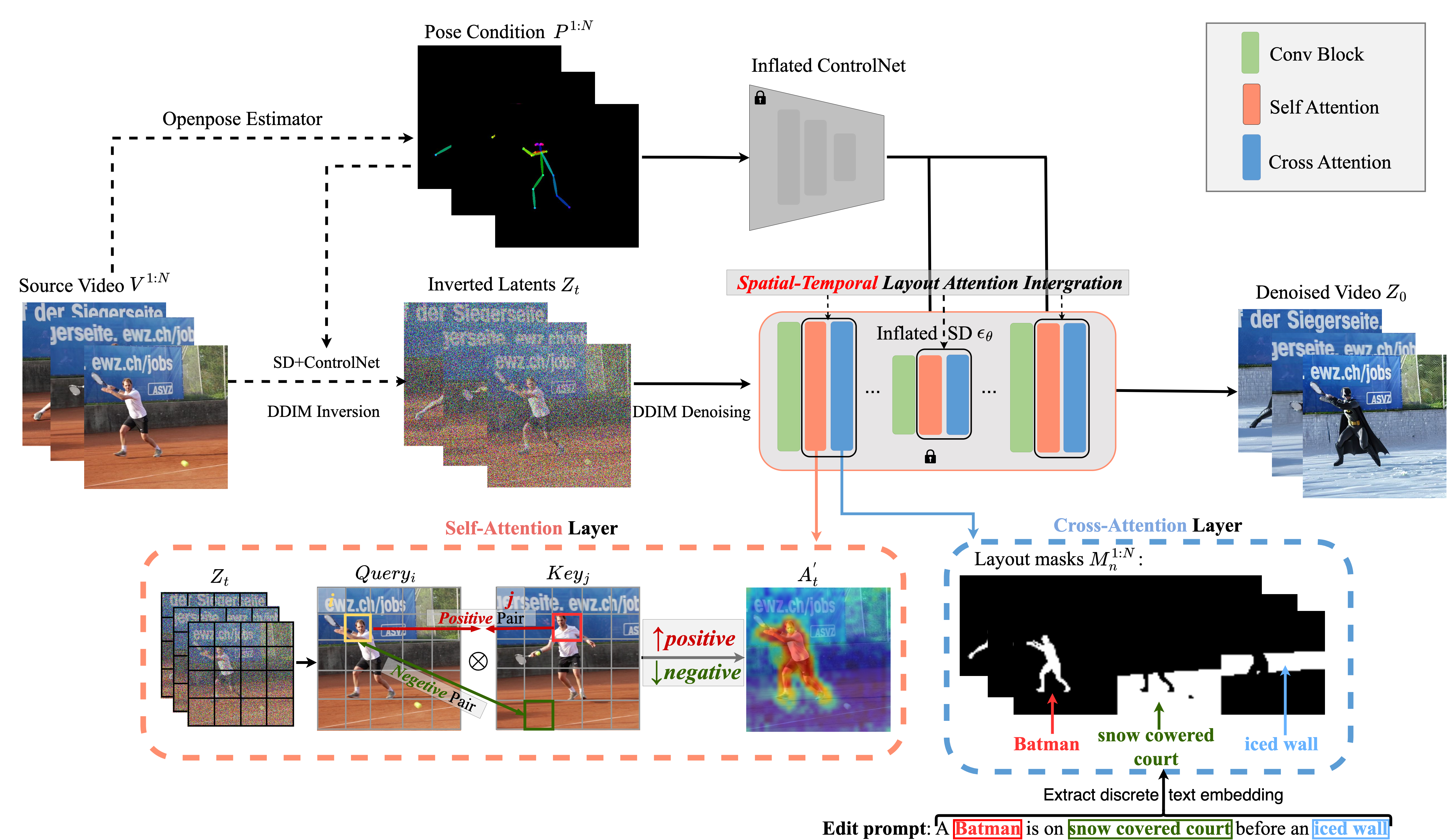

Method

- Maximizing the cosine cross-frame DIFT similarity to identify its corresponding positive pair in other frames (sharing the same attribute).

- Minimizing this similarity to find its negative pair (across different attributes).

|

We integrate the ST-Layout Attn within the frozen SD in the denoising

process. In the self-attention layer, we compute the positive/negative value of each query token in

different attributes from a spatial-temporal perspective, This allows us to augment the attention

scores for tokens within the same attribute and reduce them for tokens in different attributes.

In the cross-attention layer, we extract each attribute’s text embeddings from the edit prompt,

ensuring they focus only on corresponding layouts across frames. |

EVA Editing Results (Click for More Results)

| man → Batman, clay court → snow covered court, stone wall -> an iced wall | man → Ironman, ground → grassland | ||

|---|---|---|---|

| girl → Batwoman, ground → snow covered ground | woman → Scarlet Witch, sofa → a moonlit pond, background → starry dark night | ||

|---|---|---|---|

|

left man→ Iron Man, right man → Spider Man trees, ground → frosty yellow leaves |

back man→ Iron Man, front man → Batman bridge, ground → snow covered |

||

|---|---|---|---|

|

left man→ Iron Man, right man → Batman treess→ crimson maple trees, road → snow covered road |

man→ Batman, woman → Batwoman ground → rain soaked ground, red wall → stormy lighting night |

||

|---|---|---|---|

|

background→frosty yellow leaves, → sphalt road with building under sky |

left man→ Iron Man, right man → Spider-Man |

left man→ Spider-Man, right man → Iron Man |

wall → charcoal grey wall | man→ Spider-Man, woman→ Wonder Woman |

man→ Wonder Woman, woman → Spider-Man |

|---|---|---|---|---|---|

| ground → grassland , blue sky → raining sky |

left man→ Iron Man, right man → Spider-Man |

left man→ Spider-Man, right man → Iron Man |

store → cyberpunk cityspace | left man→ Iron Man, right man→ Hulk |

left man→ Hulk, right man → Iron Man |

|---|---|---|---|---|---|